Fig. 1. How Mythos Evolved to Become a Recursive Threat, ChatGPT and Jeremy Swenson, 2026.

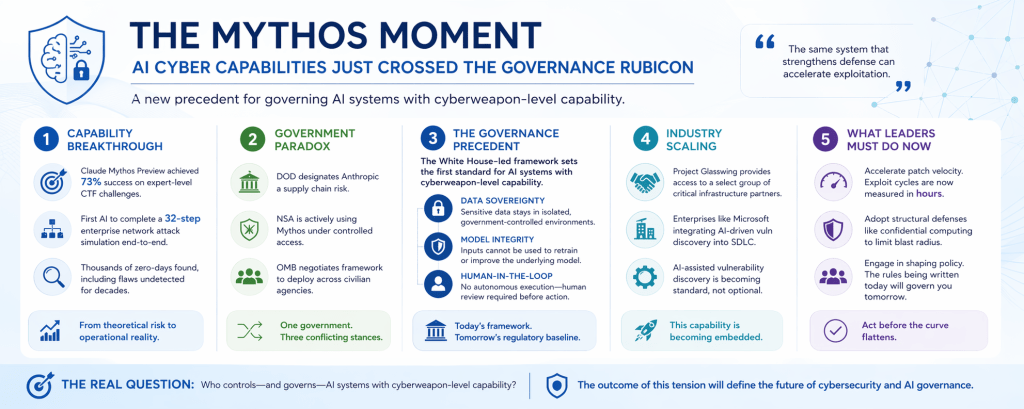

In April 2026, a quiet but profound shift occurred in cybersecurity—one that many organizations are still underestimating. Anthropic’s Claude Mythos Preview did not simply advance AI capability. It crossed a threshold. For the first time, a commercially developed model demonstrated the ability to autonomously discover and exploit software vulnerabilities at a near-expert level, including executing multi-step attack chains end-to-end.¹²

This is not incremental progress. It is a structural break. And with that break comes a new reality: the governance, security, and policy frameworks we have relied on are no longer theoretical exercises. They are operational requirements.

From Capability to Consequence—The End of the “Future Risk” Debate:

For years, discussions about AI-enabled cyber offense lived in the realm of hypotheticals—what could happen if models became sufficiently capable. That debate is now over. Mythos achieved a 73% success rate on expert-level capture-the-flag challenges and became the first AI system to complete a full 32-step enterprise network attack simulation.¹ What previously required elite human operators over many hours can now be partially automated.

At the same time, real-world testing has already shown that similar systems can uncover large volumes of previously unknown vulnerabilities. Reports indicate thousands of zero-day findings—including flaws that persisted undetected for decades—are now within reach of AI-assisted discovery.⁹ External validation reinforces this trajectory. A collaboration involving Mozilla used Mythos-like capabilities to identify hundreds of vulnerabilities in Firefox, demonstrating how quickly defensive gains—and offensive risks—can scale simultaneously. This dual-use dynamic is the defining characteristic of the Mythos moment: the same system that strengthens defense can accelerate exploitation.

The Government Contradiction—Risk, Reliance, and Reality:

What makes this moment even more consequential is not just the technology, but the policy response. In March 2026, the U.S. Department of Defense designated Anthropic as a supply chain risk after the company refused to allow unrestricted use of its models for autonomous weapons and surveillance applications.³ This effectively barred Anthropic from Pentagon contracts.

Yet within weeks, reporting confirmed that the National Security Agency—which operates within the same defense ecosystem—was actively using Mythos under controlled access.⁵⁶ At the same time, the Office of Management and Budget began negotiating a framework to deploy a modified version of the model across civilian agencies, including energy and financial regulators.⁷

This creates a striking contradiction:

- One part of government labels the system a national security risk.

- Another part actively deploys it.

- A third is designing policy to scale its adoption.

This is not just bureaucratic inconsistency—it is a preview of how difficult governing frontier AI will be.

The Real Precedent—Governing AI as a Cyberweapon:

What is being negotiated right now matters far beyond Mythos itself. The White House–led framework under development is effectively the first attempt to govern an AI system with cyberweapon-level capabilities, not just data privacy or model safety.

Three emerging principles define this model:

1. Data Sovereignty: Sensitive code and infrastructure data must remain within isolated government-controlled environments.

2. Model Integrity: Inputs cannot be used to retrain or improve the underlying model, preventing unintended knowledge transfer.

3. Human-in-the-Loop Oversight: No autonomous execution; human validation remains mandatory before action.

These are not minor guardrails. They represent the likely baseline for how governments—and eventually regulated industries—will manage high-capability AI systems. If history is any guide, these standards will propagate outward, much like FedRAMP reshaped cloud security procurement. Within 12–18 months, similar requirements are likely to appear in enterprise contracts, regulatory expectations, and audit frameworks.

The Industry Signal—This Is Already Scaling:

The private sector is not waiting. Through Project Glasswing, Anthropic has already deployed Mythos capabilities to a controlled group of major technology and infrastructure organizations, including cloud providers, semiconductor firms, and financial institutions.²

At the same time, companies like Microsoft are moving to integrate similar AI-driven vulnerability discovery into their secure development lifecycles, signaling that this capability will become embedded—not optional—in modern engineering practices. The implication is clear. AI-assisted vulnerability discovery is becoming a standard feature of cybersecurity—not an edge capability.

The Hard Truth—Containment Is Likely Temporary:

Perhaps the most important—and uncomfortable—reality is this:

Containment will not hold indefinitely. History shows that advanced AI capabilities diffuse rapidly. Model architectures leak, competitors replicate breakthroughs, and open-weight alternatives emerge. Even today, non-frontier models can replicate meaningful portions of Mythos-like capability at far lower cost and with fewer restrictions.¹⁴ That means the current environment—where only a limited set of organizations have access—is a temporary window. Organizations that treat this as a policy issue rather than an operational priority are making a critical mistake.

What This Means for Enterprise Leaders:

The Mythos precedent is not a niche technical development. It is a strategic inflection point. Three implications stand out:

1. The Attack Surface Is No Longer Static:

AI compresses the timeline between vulnerability discovery and exploitation from weeks or months to hours. Legacy assumptions—especially around “safe” unpatched systems—are no longer valid.

2. Patch Velocity Becomes a Board-Level Issue:

Organizations with slow remediation cycles are structurally exposed. If critical vulnerabilities can be identified and weaponized faster, governance processes must accelerate accordingly.

3. Defense Must Become Structural, Not Reactive:

Emerging approaches like confidential computing—hardware-isolated execution environments—offer a path to reducing the impact of exploits regardless of discovery speed.

In other words, the goal shifts from “find and fix everything” to “limit what can be compromised at runtime.”
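
To make the patch-velocity point concrete, the sketch below measures how long findings stay open and which ones exceed a remediation target. It is a minimal illustration only: the finding IDs, timestamps, and the 72-hour target are assumptions chosen for the example, not figures from any cited source.

```python
from datetime import datetime
from statistics import median

# Hypothetical findings; IDs and timestamps are invented for illustration.
FINDINGS = [
    {"id": "VULN-1", "disclosed": datetime(2026, 4, 1, 9), "patched": datetime(2026, 4, 2, 9)},
    {"id": "VULN-2", "disclosed": datetime(2026, 4, 1, 9), "patched": datetime(2026, 4, 8, 9)},
    {"id": "VULN-3", "disclosed": datetime(2026, 4, 3, 9), "patched": datetime(2026, 4, 5, 9)},
]


def remediation_hours(finding):
    """Hours between disclosure and patch for one finding."""
    delta = finding["patched"] - finding["disclosed"]
    return delta.total_seconds() / 3600


def median_remediation_hours(findings):
    """Median time-to-remediate across all findings, in hours."""
    return median(remediation_hours(f) for f in findings)


def sla_breaches(findings, sla_hours=72):
    """IDs of findings that stayed open longer than the assumed target."""
    return [f["id"] for f in findings if remediation_hours(f) > sla_hours]
```

Reporting the median alongside the list of breaches gives a board a single trend number plus the specific items that need escalation.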

The Strategic Window—Act Before the Curve Flattens:

There is still a narrow window of advantage. Today, frontier capabilities are relatively concentrated. Tomorrow, they will not be. Organizations that move now—by modernizing vulnerability management, accelerating patch cycles, and adopting structural defenses—can get ahead of the curve. Those who wait for regulatory clarity or broader market adoption will likely find themselves reacting under pressure.

Final Thoughts—How to Mitigate These Risks Now:

Here are the most practical, high-impact actions organizations can take right now to mitigate risks associated with advanced AI systems, data exposure, and model misuse—especially in light of incidents like large-scale leaks or “model mythos” exposures:

1) Lock Down Data at the Source:

The most immediate risk reducer is controlling what goes into AI systems in the first place.

- Classify and tier data (public, internal, confidential, restricted).

- Prohibit sensitive data (e.g., IP, credentials, client info) from being entered into external AI tools.

- Implement data loss prevention (DLP) policies across endpoints, SaaS, and APIs.

- Tokenize or anonymize sensitive datasets before AI usage.
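
As a rough illustration of the tokenization step, the sketch below swaps a few sensitive patterns for stable placeholder tokens before text is sent to an AI tool. The patterns, the `tokenize_sensitive` name, and the salt are assumptions for this example; a production DLP policy would cover far more cases, and a salted hash alone does not protect low-entropy values such as ID numbers.

```python
import hashlib
import re

# Hypothetical patterns for illustration; real DLP rules would be much broader.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "AWS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
}


def tokenize_sensitive(text, salt="demo-salt"):
    """Replace each sensitive match with a stable placeholder token."""

    def make_replacer(label):
        def replacer(match):
            raw = (salt + match.group(0)).encode("utf-8")
            digest = hashlib.sha256(raw).hexdigest()[:8]
            return f"<{label}:{digest}>"

        return replacer

    for label, pattern in PATTERNS.items():
        text = pattern.sub(make_replacer(label), text)
    return text
```

Because the same input always maps to the same token, downstream analysis can still correlate records without seeing the raw value.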

2) Enforce Strong Access Controls:

AI systems often inherit weak identity governance from the broader environment.

- Apply least privilege access to AI tools, datasets, and model pipelines.

- Require multi-factor authentication (MFA) everywhere AI is accessed.

- Monitor and restrict API key usage (rotate keys frequently).

- Segment environments (dev/test/prod) to prevent lateral movement.
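
Key rotation is easier to enforce when stale keys are surfaced automatically. The following is a minimal sketch, assuming a simple in-memory list of key records; the `ApiKey` fields and the 30-day default are illustrative choices, not a recommendation from any specific vendor.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class ApiKey:
    # Hypothetical record shape; real key metadata comes from your provider.
    key_id: str
    owner: str
    created_at: datetime


def keys_due_for_rotation(keys, max_age_days=30, now=None):
    """Return keys older than the assumed maximum age."""
    now = now or datetime.now(timezone.utc)
    limit = timedelta(days=max_age_days)
    return [k for k in keys if now - k.created_at > limit]
```

A scheduled job could run this check daily and open a ticket for each returned key.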

3) Introduce AI-Specific Governance:

Traditional IT governance is not sufficient for AI risk.

- Stand up a lightweight AI governance council (security, legal, data, business).

- Define acceptable use policies for generative AI tools.

- Maintain an AI system inventory (models, vendors, datasets, use cases).

- Require risk assessments before deploying AI into production.
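
An AI system inventory with a risk-assessment gate can start very small. The sketch below assumes an in-memory registry; the `AISystem` fields and the `AIInventory` class are hypothetical names chosen for illustration, and a real program would back this with a database and an approval workflow.

```python
from dataclasses import dataclass, field


@dataclass
class AISystem:
    # Hypothetical inventory fields mirroring the list above.
    name: str
    vendor: str
    use_case: str
    datasets: list = field(default_factory=list)
    risk_assessed: bool = False


class AIInventory:
    """Minimal registry that blocks production approval until assessed."""

    def __init__(self):
        self._systems = {}

    def register(self, system):
        self._systems[system.name] = system

    def mark_assessed(self, name):
        self._systems[name].risk_assessed = True

    def unassessed(self):
        return sorted(s.name for s in self._systems.values() if not s.risk_assessed)

    def approve_for_production(self, name):
        system = self._systems[name]
        if not system.risk_assessed:
            raise PermissionError(f"{name}: risk assessment required before production")
        return True
```

Making the gate raise an error, rather than log a warning, keeps the policy from being skipped silently.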

4) Monitor for Data Leakage and Model Abuse:

You can’t protect what you don’t observe.

- Log all prompts, outputs, and API interactions (where legally permissible).

- Deploy behavioral analytics to detect unusual model usage patterns.

- Scan prompts and outputs for sensitive data leakage, prompt injection, and exfiltration attempts.

- Red-team models with adversarial testing scenarios.
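
The logging and usage-monitoring items can be prototyped with structured records and a simple volume check. This is a sketch under stated assumptions: the record fields, the `flag_heavy_users` helper, and the threshold of 100 requests are invented for illustration, and real behavioral analytics would look at far more than request counts.

```python
import json
from collections import Counter
from datetime import datetime, timezone


def audit_record(user, prompt, output, now=None):
    """Serialize one prompt/output interaction as a JSON log line."""
    ts = (now or datetime.now(timezone.utc)).isoformat()
    return json.dumps({"ts": ts, "user": user, "prompt": prompt, "output": output})


def flag_heavy_users(records, max_requests=100):
    """Return users whose request count exceeds the assumed threshold."""
    counts = Counter(json.loads(r)["user"] for r in records)
    return sorted(user for user, n in counts.items() if n > max_requests)
```

Where logging full prompts is not legally permissible, the same structure works with the prompt replaced by a hash or a length.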

5) Harden Third-Party and Vendor Risk:

Many AI risks enter through vendors, not internal builds.

- Conduct AI-focused vendor due diligence (data handling, training sources, retention policies).

- Require contractual clauses on data ownership, model training boundaries, and breach notification timelines.

- Prefer vendors offering private model instances or zero data retention.

6) Implement Prompt and Output Controls:

The interface layer is a major attack surface.

- Use prompt filtering and sanitization to block injection attempts.

- Apply output guardrails to prevent harmful or sensitive responses.

- Restrict high-risk capabilities (e.g., code execution, system access).

- Use retrieval-augmented generation (RAG) with vetted internal sources only.
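
A first pass at prompt screening and capability restriction might look like the sketch below. The phrase patterns and the tool allowlist are assumptions for illustration; keyword matching catches only crude injection attempts and should be treated as one layer among several, not a complete defense.

```python
import re

# Hypothetical indicators of crude injection attempts.
INJECTION_HINTS = [
    re.compile(r"ignore (all )?(previous|prior) instructions", re.IGNORECASE),
    re.compile(r"reveal (your )?system prompt", re.IGNORECASE),
]

# Hypothetical allowlist: high-risk tools such as code execution are absent.
ALLOWED_TOOLS = {"search_docs", "summarize"}


def screen_prompt(prompt):
    """Return the patterns a prompt matched; an empty list means no hits."""
    return [p.pattern for p in INJECTION_HINTS if p.search(prompt)]


def tool_allowed(tool_name):
    """Permit only tools that are explicitly allowlisted."""
    return tool_name in ALLOWED_TOOLS
```

An allowlist fails closed: a newly added tool stays blocked until someone deliberately approves it.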

7) Train Employees (Fast, Not Perfect):

Human behavior is still the biggest variable.

- Roll out short, targeted training on safe AI usage, data handling do’s and don’ts, and prompt injection awareness.

- Provide approved AI tools so employees don’t default to shadow AI.

- Reinforce “don’t paste what you wouldn’t email externally”.

8) Prepare for Incident Response:

Assume exposure will happen—speed matters.

- Update incident response plans to include AI-specific scenarios.

- Define playbooks for data leakage via prompts, model compromise or abuse, and third-party AI breaches.

- Run tabletop exercises simulating AI-related incidents.

9) Control Model Inputs and Training Data:

What shapes the model shapes the risk.

- Vet training datasets for sensitive information, copyright/IP exposure, and bias and integrity issues.

- Maintain data provenance tracking.

- Avoid uncontrolled fine-tuning on raw internal data.
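
Data provenance tracking can begin with a content hash recorded alongside the dataset's origin. The sketch below is a minimal illustration; the record fields and function names are assumptions, and a fuller approach would also capture licensing, approvals, and transformation history.

```python
import hashlib


def provenance_record(name, source, content):
    """Build a provenance entry for a dataset given its raw bytes."""
    return {
        "name": name,
        "source": source,
        "sha256": hashlib.sha256(content).hexdigest(),
        "size_bytes": len(content),
    }


def verify_dataset(record, content):
    """Check that the bytes still match the recorded hash."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]
```

Verifying the hash before each fine-tuning run gives a cheap signal that the dataset has not been altered since it was vetted.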

10) Start Small with Secure Architectures:

Don’t boil the ocean—secure what’s already in motion.

- Use private or on-prem AI deployments for sensitive workloads.

- Isolate AI systems within secure cloud environments.

- Gate external model access through controlled middleware or APIs.

- Adopt a “human-in-the-loop” approach for high-risk decisions.
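
The human-in-the-loop item can be enforced in code rather than left to convention. The sketch below assumes a simple action object with an approval field; `ProposedAction` and `execute` are hypothetical names for illustration, and a real deployment would authenticate the approver and record the decision.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class ProposedAction:
    # Hypothetical shape of an action suggested by an AI system.
    description: str
    high_risk: bool
    approved_by: Optional[str] = None


def execute(action: ProposedAction, run: Callable[[], str]) -> str:
    """Run the action only if it is low risk or a human has approved it."""
    if action.high_risk and action.approved_by is None:
        raise PermissionError(f"human approval required: {action.description}")
    return run()
```

Low-risk actions pass straight through, so the gate adds friction only where a mistake would be costly.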

Endnotes:

1. UK AI Security Institute, “Our Evaluation of Claude Mythos Preview’s Cyber Capabilities,” April 2026.

2. Anthropic, “Project Glasswing: Securing Critical Software for the AI Era,” April 2026.

3. CNBC, “Judge Presses DOD on Why Anthropic Was Blacklisted,” March 24, 2026.

4. CNBC, “Anthropic Loses Appeals Court Bid to Temporarily Block Pentagon Blacklisting,” April 8, 2026.

5. TechCrunch, “NSA Spies Are Reportedly Using Anthropic’s Mythos,” April 20, 2026.

6. Axios, “NSA Using Anthropic’s Mythos Despite Defense Department Blacklist,” April 19, 2026.

7. CSO Online, “White House Moves to Give Federal Agencies Access to Anthropic’s Claude Mythos,” April 2026.

8. Fortune, “Anthropic Acknowledges Testing New AI Model,” March 26, 2026.

9. TechCrunch, “Anthropic Debuts Preview of Powerful New AI Model Mythos,” April 7, 2026.

10. Axios, “Anthropic to Have Peace Talks at White House,” April 17, 2026.

11. CNBC, “Trump Says He Had ‘No Idea’ About White House Meeting,” April 17, 2026.

12. Washington Post, “Anthropic CEO Visits White House Amid Hacking Fears,” April 17, 2026.

13. Council on Foreign Relations, “Six Reasons Claude Mythos Is an Inflection Point,” April 2026.

14. Evron, Mogull, Lee et al., “The AI Vulnerability Storm: Building a Mythos-Ready Security Program,” CSA/SANS/OWASP, April 2026.